Correctness Check:

Claim Verification and Error Discovery for Scientific Papers

CSPaper

A part of Scholar7 AB · March 2026

In academic peer review, AI can already help produce faster, more structured review comments. But an important question remains: can those judgments be grounded in verifiable evidence?

Correctness Check is our answer. It adds a verification layer to AI review by checking whether a paper's core claims are well supported, and by surfacing the places that deserve closer scrutiny.

Why Correctness Check matters more than ever

Academic peer review is under more pressure than ever before. Submission volume has surged: the number of papers submitted to ICLR grew from 1,013 in 2018 to 19,814 in 2026, a nearly twentyfold increase in less than a decade [1]. At the same time, reviewer attention remains extremely limited. Xi et al. note that the exponential growth of scientific output has made it increasingly difficult for reviewers to reliably detect errors, given the limited pool of human experts [2].

Meanwhile, findings from Bianchi et al. show that even papers published at top-tier conferences still contain a nontrivial number of objective errors, and that the average number of such errors per paper has been rising over time. At NeurIPS, for instance, the average increased from 3.8 in 2021 to 5.9 in 2025, an increase of 55.3% [3]. A paper often combines complex theoretical derivations, experimental designs, and citation networks. Objective errors can emerge in any of these parts, yet it is nearly impossible for human reviewers to thoroughly verify each one under severe time constraints. Shah also shows that expert reviewers may fail to detect major errors in a paper while still recommending acceptance [4].
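Both growth figures can be re-derived from the numbers quoted above. The short check below uses only the values stated in the text; the variable names are ours.

```python
# Values as quoted in the text, from [1] and [3].
iclr_2018, iclr_2026 = 1_013, 19_814
neurips_2021, neurips_2025 = 3.8, 5.9

# Ratio of ICLR submissions in 2026 relative to 2018.
iclr_growth_factor = iclr_2026 / iclr_2018

# Relative increase in the average number of objective errors per NeurIPS paper.
error_increase_pct = (neurips_2025 - neurips_2021) / neurips_2021 * 100

print(f"ICLR growth factor: {iclr_growth_factor:.1f}x")        # 19.6x
print(f"NeurIPS error increase: {error_increase_pct:.1f}%")    # 55.3%
```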

This is exactly why Correctness Check matters. It adds a verification layer to peer review by prioritizing the claims, evidence, and potential errors in a paper that are most worthy of scrutiny, helping direct limited reviewer attention to the places where verification matters most.

Figure: ICLR submissions from 2018 to 2026, illustrating how fast evaluation demand is rising.

Figure: Increase in the average number of objective errors per paper from 2021 to 2025.

It does not try to judge everything, only what is most worth verifying

Correctness Check does not aim to judge whether a paper is generally good or bad, nor does it try to answer more subjective questions such as whether the work is interesting, important, elegantly written, or ultimately worthy of acceptance. Instead, it focuses on the questions where verification matters most.

1. Are the paper's claims actually supported?

This does not necessarily mean the paper contains an outright error. A claim may still need additional experiments, narrower wording, or a more careful definition of scope. For novelty claims such as "the first" or "novel," the system also checks whether the statement holds up against relevant prior work.

2. Are there objective, verifiable errors?

These issues include derivation errors, logical inconsistencies, discrepancies between experiments and conclusions, and other problems that would directly undermine the paper's credibility.

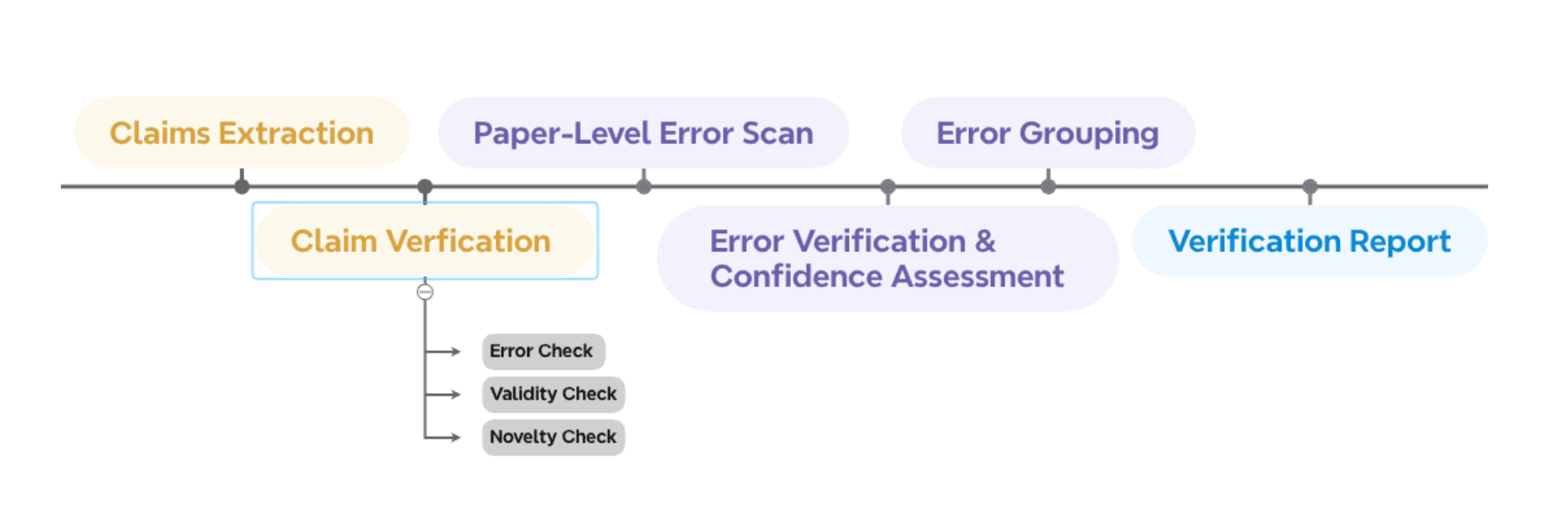

From paper to evidence: how Correctness Check works

Correctness Check is not a one-shot system that generates a final conclusion. It is a multi-stage, traceable, verification-centric workflow built to make intermediate states inspectable instead of hidden.

Core claims extraction

One of the most critical design choices is the strict separation of claim identification from verification. Separating the two steps makes the process more reliable and easier to trace. After identifying core claims, we adopt the methodology of Metropolitansky and Larson [5] to perform minimal, context-based claim refinement, such as replacing pronouns with their referents, which avoids subject misidentification and semantic drift during later verification.
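As a rough illustration of what "minimal refinement" could look like, the sketch below keeps the original sentence next to its refined form and records what was changed. It is a toy, not the CSPaper implementation or the method of [5]: the `Claim` class, `refine_claim`, and the hand-supplied `subject` argument are all hypothetical, and a real system would resolve the referent from context.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Claim:
    """A core claim, stored separately from any later verification result."""
    original: str                                   # sentence as it appears in the paper
    refined: str                                    # self-contained version used for checking
    edits: list[str] = field(default_factory=list)  # trace of what was changed


def refine_claim(sentence: str, subject: str) -> Claim:
    """Toy refinement: replace a leading pronoun with an explicit subject.

    Assumption: the subject is passed in by hand purely for illustration.
    """
    pattern = re.compile(r"^(It|This|They|We)\b")
    match = pattern.match(sentence)
    if match is None:
        # Nothing to resolve, so the claim is left untouched.
        return Claim(original=sentence, refined=sentence)
    refined = pattern.sub(subject, sentence, count=1)
    return Claim(
        original=sentence,
        refined=refined,
        edits=[f"replaced '{match.group(1)}' with '{subject}'"],
    )


claim = refine_claim(
    "It outperforms all baselines on three benchmarks.",
    subject="The proposed method",
)
print(claim.refined)
print(claim.edits)
```

Keeping `original` and `edits` alongside `refined` is what makes the refinement auditable: a reader can always see which words were substituted before verification began.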

Three kinds of validation for each claim

Nontrivial error check: the focus is not on spelling or formatting, but on technical problems that genuinely affect whether the claim can stand, such as invalid formulas, broken reasoning chains, mismatches between results and conclusions, or assumptions that do not actually hold.

Evidence sufficiency check: even when no explicit error is found, a claim may still be weakly supported because of limited experimental coverage, incomplete baselines, or theoretical proofs that do not fully close the argument. In such cases, the claim is marked as unsupported and the evidence gaps are surfaced.

Novelty claim check: when the system detects language such as "first to propose," "first to prove," or "first to apply," it searches for relevant prior work and uses those results to assess whether the novelty claim should be examined more carefully.
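The three checks can be pictured as feeding one per-claim status. The sketch below is a simplified assumption, not the production logic: the status names, the regular expression of trigger phrases (modeled on the examples quoted above), and the rule that an error outranks an evidence gap are all hypothetical.

```python
import re
from enum import Enum


class ClaimStatus(Enum):
    SUPPORTED = "supported"
    UNSUPPORTED = "unsupported"   # evidence gaps were found
    HAS_ERROR = "has_error"       # a nontrivial technical error was found


# Hypothetical trigger phrases for the novelty check.
NOVELTY_PATTERN = re.compile(r"\b(first to \w+|the first|novel)\b", re.IGNORECASE)


def needs_novelty_check(claim_text: str) -> bool:
    """Return True when the claim contains novelty language worth a prior-work search."""
    return NOVELTY_PATTERN.search(claim_text) is not None


def overall_status(has_error: bool, evidence_gaps: list[str]) -> ClaimStatus:
    """Combine the error check and the evidence check (assumed precedence: error first)."""
    if has_error:
        return ClaimStatus.HAS_ERROR
    if evidence_gaps:
        return ClaimStatus.UNSUPPORTED
    return ClaimStatus.SUPPORTED


print(needs_novelty_check("We are the first to apply this technique to proteins."))
print(overall_status(False, ["no comparison against a strong baseline"]))
```

A keyword match only decides whether a prior-work search is worth running; it says nothing about whether the novelty claim actually holds.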

Paper-level scan for potential errors

Beyond claim-centered verification, Correctness Check also runs a recall-first scan across the full paper. This stage aims to capture local issues that may not be directly tied to the selected core claims, but could still affect the paper's overall credibility.

Double-checking and confidence assessment

After collecting candidate error snippets, Correctness Check verifies them again to identify and remove false positives. At the same time, it assigns a confidence score to each suspected issue, which later supports ranking, aggregation, and presentation.
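One way to picture this second pass is as a re-scoring filter. In the minimal sketch below, the `Candidate` class, the `recheck` callable, and the 0.5 cutoff are assumptions for illustration; the text does not specify how the verifier works or whether a fixed threshold is used.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Candidate:
    snippet: str
    explanation: str
    confidence: float = 0.0   # filled in by the second pass


def double_check(
    candidates: list[Candidate],
    recheck: Callable[[Candidate], float],
    threshold: float = 0.5,   # hypothetical cutoff
) -> list[Candidate]:
    """Re-score every candidate and drop those that look like false positives."""
    kept = []
    for cand in candidates:
        cand.confidence = recheck(cand)
        if cand.confidence >= threshold:
            kept.append(cand)
    return kept


candidates = [
    Candidate("Eq. (3) sign flips between steps", "derivation error"),
    Candidate("Section 2 uses informal wording", "style only"),
]
# Hypothetical second-pass scores; in practice these would come from a verifier.
scores = {candidates[0].snippet: 0.85, candidates[1].snippet: 0.10}
kept = double_check(candidates, recheck=lambda c: scores[c.snippet])
print([c.snippet for c in kept])
```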

Grouping and aggregating potential errors

For the error snippets that still remain, the system performs semantic grouping, clustering together problems that are essentially about the same underlying issue. It then produces concise group-level summaries, sorts them by confidence, and forms the final list of findings presented to the reader.
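The grouping step can be sketched with a deliberately crude stand-in for semantic similarity. The example below uses token-overlap (Jaccard) similarity and greedy assignment, which is our substitution for illustration only; the actual system's similarity measure, the 0.5 threshold, and ranking a group by its most confident member are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    text: str
    confidence: float


def jaccard(a: str, b: str) -> float:
    """Token-overlap similarity, used here as a simple proxy for semantic similarity."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def group_findings(findings: list[Finding], min_sim: float = 0.5) -> list[list[Finding]]:
    """Greedily attach each finding to the first group whose seed is similar enough."""
    groups: list[list[Finding]] = []
    for f in findings:
        for g in groups:
            if jaccard(f.text, g[0].text) >= min_sim:
                g.append(f)
                break
        else:
            groups.append([f])   # no similar group found, so start a new one
    # Rank groups by their most confident member, highest first.
    groups.sort(key=lambda g: max(x.confidence for x in g), reverse=True)
    return groups


findings = [
    Finding("Table 2 accuracy contradicts the abstract", 0.6),
    Finding("Equation 4 drops a factor of two", 0.9),
    Finding("Table 2 accuracy contradicts the conclusion", 0.7),
]
for group in group_findings(findings):
    print([f.text for f in group])
```

Here the two Table 2 findings collapse into one group, and the Equation 4 finding is listed first because it carries the highest confidence.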

Turning verification into a reader-friendly report

Finally, the pipeline organizes the intermediate states produced throughout the verification process into a report that is readable, traceable, and open to further inspection.

How to read a Correctness Check report

The output is not a long block of free-form text. Instead, it is organized into two parts that are much easier to read and act on.

Claim Validity and Evidence

This part asks one central question: do the paper's most important claims actually hold up? It explains whether each core claim has serious issues that weaken it, whether the evidence provided is sufficient, and where the main gaps are when support is incomplete. If the authors make novelty statements, this section also highlights whether those claims deserve closer scrutiny.

Findings Beyond the Claims

This part asks a different question: outside the core claims, what else in the paper is worth a closer look? These issues may not map directly to a main claim, but they can still affect the paper's credibility. The system provides the relevant snippet, location cues, a short explanation, and an approximate confidence level so the reader can focus quickly on what matters most.
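To make the two-part layout concrete, the sketch below models each part as a small record type and renders both as plain text. The field names and the output format are hypothetical; they mirror the elements described above (verdict, evidence gaps, novelty flag, snippet, location, explanation, confidence) rather than the actual report schema.

```python
from dataclasses import dataclass, field


@dataclass
class ClaimEntry:
    claim: str
    verdict: str                                    # e.g. "supported" or "unsupported"
    evidence_gaps: list[str] = field(default_factory=list)
    novelty_flag: bool = False


@dataclass
class OtherFinding:
    snippet: str
    location: str
    explanation: str
    confidence: float


def render_report(claims: list[ClaimEntry], findings: list[OtherFinding]) -> str:
    """Render the two report parts as plain text, most confident findings first."""
    lines = ["Claim Validity and Evidence"]
    for c in claims:
        flag = " [check novelty]" if c.novelty_flag else ""
        lines.append(f"- {c.claim}: {c.verdict}{flag}")
        lines.extend(f"    gap: {g}" for g in c.evidence_gaps)
    lines.append("Findings Beyond the Claims")
    for f in sorted(findings, key=lambda x: x.confidence, reverse=True):
        lines.append(f'- ({f.confidence:.2f}) {f.location}: "{f.snippet}" ({f.explanation})')
    return "\n".join(lines)


report = render_report(
    claims=[ClaimEntry("The method is the first to scale linearly", "unsupported",
                       ["only two dataset sizes tested"], novelty_flag=True)],
    findings=[OtherFinding("lr = 1e-3", "Appendix B", "differs from Section 4", 0.72)],
)
print(report)
```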

How Correctness Check strengthens peer review

Correctness Check adopts a verification-first design principle, transforming the review task into a problem of evidence gathering [6]. That makes it especially useful for surfacing objective errors, weakly supported claims, missing evidence, and novelty risks that can be investigated systematically.

The system already offers a strong end-to-end structure, but two limitations point to directions for future refinement. First, it depends on the underlying model's domain capability, so highly specialized areas can remain difficult. Second, the novelty claim check is bounded by the coverage of external retrieval, so relevant prior work can sometimes be missed.

Verification-first means the goal is not to imitate reviewer prose. The goal is to produce auditable signals that make human verification faster, sharper, and more targeted.

Who may benefit from Correctness Check?

The most natural use case is pre-submission self-checking. Before formally submitting a paper, authors can examine whether their core claims are actually supported well enough, where the evidence is still weak, which statements may be too strong, and which novelty claims should be checked more carefully or narrowed.

For reviewers, Correctness Check is best understood as a verification-first attention-routing tool. It works as a complement to review judgment, helping reviewers see more quickly which claims deserve the closest scrutiny, which passages may contain critical errors, and which novelty statements are worth checking more carefully.

For editors or platforms, Correctness Check can serve as an upstream quality-screening tool. Before a submission reaches human reviewers, the system can help identify which papers are likely to require more verification bandwidth, and which issues within them deserve the most attention.

Give it a try

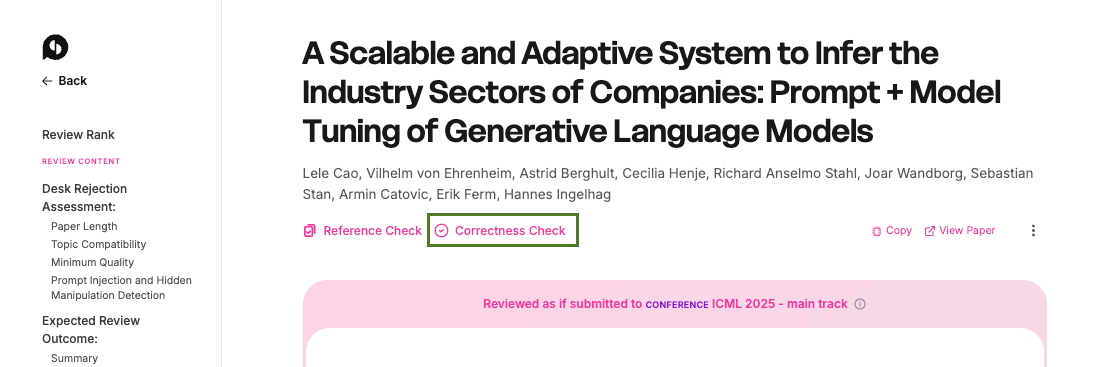

Users can encounter Correctness Check in two places. As described in the OpenPrint workflow, it is one of the verification steps available during the broader OpenPrint process. It is also available directly from each review result page, as a follow-up action when a user wants to move from a general review view to a deeper investigation of claims, evidence, and possible errors.

Try Correctness Check either through the OpenPrint workflow or from one of your existing review result pages.

References

[1] Paper Copilot. ICLR statistics. Retrieved March 23, 2026, from papercopilot.com/statistics/ICLR-statistics/

[2] Xi, S., Rao, V., Payan, J., & Shah, N. B. (2025). FLAWS: A benchmark for error identification and localization in scientific papers. arXiv preprint arXiv:2511.21843.

[3] Bianchi, F., Kwon, Y., Izzo, et al. (2025). To err is human: Systematic quantification of errors in published AI papers via LLM analysis. arXiv preprint arXiv:2512.05925.

[4] Shah, N. B. (2022). Challenges, experiments, and computational solutions in peer review. Communications of the ACM, 65(6), 76-87.

[5] Metropolitansky, D., & Larson, J. (2025). Towards effective extraction and evaluation of factual claims. arXiv preprint arXiv:2502.10855.

[6] You, L., Cao, L., & Gurevych, I. (2026). Preventing the collapse of peer review requires verification-first AI. arXiv preprint arXiv:2601.16909.