Reference Validity Check: A Step Towards Verification-First AI for Paper Peer Review

CSPaper

A part of Scholar7 AB · March 2026

Citations are the lightest-weight evidence trail in a scientific paper, and also one of the easiest places for errors (or hallucinations) to hide. As claim production becomes cheaper while reviewer time stays fixed, scientific evaluation drifts toward proxy signals. Our recent work argues for verification-first AI: tools should expand verification capacity by producing auditable artifacts, not by imitating review prose. Today we ship a first concrete step: Reference Validity Check, an automated audit that resolves each bibliography entry to an external scholarly record (when possible) and surfaces a transparent, actionable report for authors, reviewers, and editors.

Why citation validity matters more than ever

If you have reviewed papers recently, you have likely felt the same friction: you click a citation because a claim sounds important, and the trail breaks. Sometimes the reference exists but the metadata is wrong (e.g., title/author mismatch). Sometimes the PDF is unreachable. Sometimes the cited work does not appear to exist at all. Individually these errors look small; collectively they create a new bottleneck: the inability to quickly verify the evidence trail of a paper.

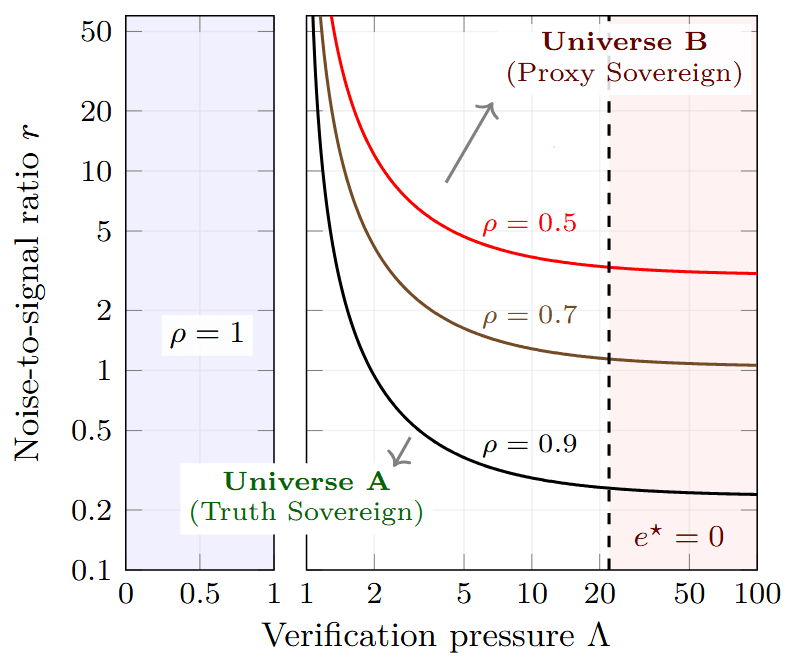

In our recent position paper [1], we model evaluation as a mixture of (1) occasional high-fidelity verification and (2) frequent reliance on low-cost proxies. We formalize the verification bottleneck via verification pressure:

$$\rho = \frac{\lambda \, c}{B}$$

where $\lambda$ is the rate of incoming claims, $c$ is the effective cost of producing decisive evidence, and $B$ is the community's effective verification bandwidth. When $\rho$ grows, verification becomes selective and the system increasingly rewards proxy signals.
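A small worked example makes the pressure term concrete. It assumes the demand-over-capacity form (claim rate times per-claim verification cost, divided by bandwidth); the function name and all numbers are hypothetical and not taken from [1].

```python
def verification_pressure(claim_rate: float, cost_per_claim: float, bandwidth: float) -> float:
    """Assumed demand-over-capacity form: (claims/month * expert-hours per claim) / expert-hours per month."""
    if bandwidth <= 0:
        raise ValueError("bandwidth must be positive")
    return claim_rate * cost_per_claim / bandwidth


# Hypothetical community: 10,000 claims/month, 2 expert-hours of decisive checking each,
# and 15,000 expert-hours of verification bandwidth per month.
rho = verification_pressure(10_000, 2.0, 15_000)
print(f"pressure = {rho:.2f}")  # → pressure = 1.33

# Under this assumed form, rho > 1 means demand exceeds capacity: at most 1/rho of claims
# can receive full-fidelity verification, and the rest are judged through proxies.
print(f"max fraction verifiable = {min(1.0, 1 / rho):.2f}")  # → max fraction verifiable = 0.75
```

The point of the arithmetic is the threshold behavior: once pressure exceeds 1, selective verification is forced by capacity, not by anyone's intentions.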

Citations are a proxy signal, and they get optimized

In a proxy-sovereign regime, communities must rely on low-cost signals that only weakly correlate with truth. Our paper highlights citation cascades as a concrete example of such a signal and analyzes citations as a proxy score in Appendix F [1].

This is not a moral claim about authors. It is an incentives claim: when verification is scarce, even well-intentioned evaluation can drift toward proxies. And once proxies influence careers, proxy optimization becomes rational behavior.

That is why citation integrity deserves special attention: citations are part of the evaluation substrate, not just formatting.

What "Reference Validity" means (and what it does not mean)

Reference validity is a narrow but high-leverage notion:

- We check: whether each bibliography entry can be resolved to a plausible external scholarly record (e.g., a canonical metadata entry in a scholarly index), and whether the metadata match is strong enough to be trusted.

- We do not check (yet): whether the cited paper actually supports the specific claim in your manuscript. That is a harder problem that requires claim-to-evidence mapping. We plan to release this capability in a month.

This distinction matters. A verified reference means "we can locate it and match it." It does not mean "your use of it is correct."
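To illustrate the "locate it" step, the sketch below builds a bibliographic search URL against the public Crossref REST API and extracts the top-ranked candidate from a Crossref-style JSON response. Crossref is only an example index here: which scholarly indexes Reference Validity Check actually queries is not stated, and the helper names are hypothetical. The network call is left out so the parsing logic can be run on a made-up response.

```python
from typing import Optional
from urllib.parse import urlencode

# Public Crossref REST endpoint, used here only as an example scholarly index.
CROSSREF_WORKS = "https://api.crossref.org/works"


def build_lookup_url(reference_text: str, rows: int = 3) -> str:
    """Build a free-text bibliographic search URL for one raw reference string."""
    params = {"query.bibliographic": reference_text, "rows": rows}
    return f"{CROSSREF_WORKS}?{urlencode(params)}"


def top_candidate(response: dict) -> Optional[dict]:
    """Return the best-ranked record from a Crossref-style response, or None if empty."""
    items = response.get("message", {}).get("items", [])
    if not items:
        return None  # nothing to match against: the entry would stay Unverified
    item = items[0]
    date_parts = item.get("issued", {}).get("date-parts") or [[None]]
    return {
        "title": (item.get("title") or [""])[0],
        "authors": [a.get("family", "") for a in item.get("author", [])],
        "year": date_parts[0][0],
        "doi": item.get("DOI"),
    }


# Made-up response, shaped like Crossref's /works output, for offline illustration.
sample = {"message": {"items": [{
    "title": ["An Example Title"],
    "author": [{"family": "Doe", "given": "J."}],
    "issued": {"date-parts": [[2024]]},
    "DOI": "10.0000/example",
}]}}

print(build_lookup_url("Doe, J. (2024). An Example Title."))
print(top_candidate(sample))
```

Locating a candidate is only half of "verified": the candidate still has to be compared field by field with the parsed entry before a match can be trusted. Returning only the top-ranked item keeps the sketch short; a real matcher would likely weigh several candidates.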

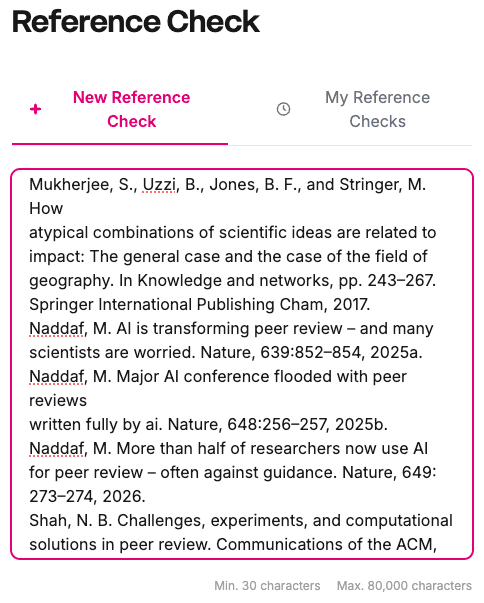

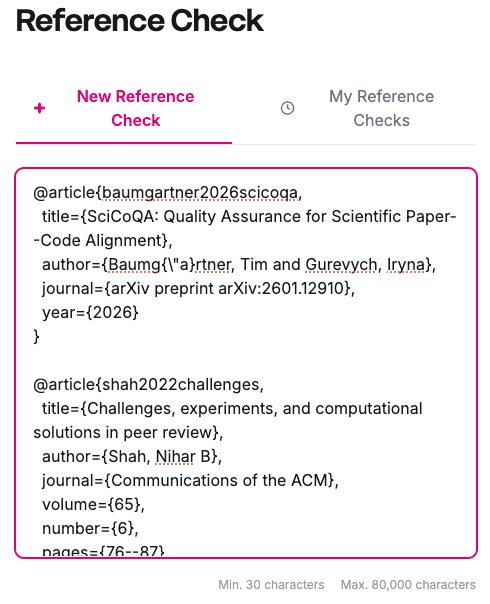

Introducing our reference validity check

Reference Validity Check is a one-click audit over the entire bibliography of a paper submitted to our platform. For now, you start by copy-pasting the reference text (from a PDF, LaTeX source, or anywhere else, as long as it contains your references) into the text box in the UI. Later, we will support triggering this check directly from your paper review results.

Note: Do not worry about formatting inconsistencies or noisy content. Our pipeline automatically detects and extracts reference-like entries embedded in the pasted text. Moreover, you can revisit any historical checks in the "My Reference Checks" tab.
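How the pipeline detects reference-like entries is not described beyond this note. As a rough illustration only, the heuristic below assumes entries start with a marker such as "[12]" or "12." at the beginning of a line (falling back to blank-line-separated blocks) and keeps chunks that are long enough and contain a plausible year. The function name, regexes, and `min_len` threshold are all hypothetical.

```python
import re
from typing import List

# Hypothetical entry marker: "[12]" (optionally followed by spaces) or "12." plus a space,
# at the start of a line. Limiting to 1-3 digits avoids splitting on wrapped "2024." lines.
MARKER = re.compile(r"^\s*(?:\[\d{1,3}\]\s*|\d{1,3}\.\s+)", re.MULTILINE)
YEAR = re.compile(r"\b(?:19|20)\d{2}\b")


def extract_reference_entries(pasted: str, min_len: int = 30) -> List[str]:
    """Split noisy pasted text into candidate entries and keep the reference-like ones."""
    starts = [m.start() for m in MARKER.finditer(pasted)]
    if starts:
        # Text before the first marker (e.g. a "References" heading) is dropped.
        chunks = [pasted[s:e] for s, e in zip(starts, starts[1:] + [len(pasted)])]
    else:
        chunks = re.split(r"\n\s*\n", pasted)  # fall back to blank-line-separated blocks
    entries = []
    for chunk in chunks:
        # Remove the leading marker and collapse line breaks and runs of spaces.
        entry = " ".join(MARKER.sub("", chunk, count=1).split())
        if len(entry) >= min_len and YEAR.search(entry):
            entries.append(entry)
    return entries


pasted = """References
[1]You, L., Cao, L., & Gurevych, I. (2026). Preventing the Collapse of Peer Review
Requires Verification-First AI. arXiv preprint arXiv:2601.16909.
[2] Baumgärtner, T., & Gurevych, I. (2026). SciCoQA: Quality Assurance for
Scientific Paper-Code Alignment. arXiv preprint arXiv:2601.12910.
[3] See appendix.
"""
for entry in extract_reference_entries(pasted):
    print(entry)
```

Here the heading and the short "[3] See appendix." fragment are discarded, while the two wrapped references are rejoined into single lines.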

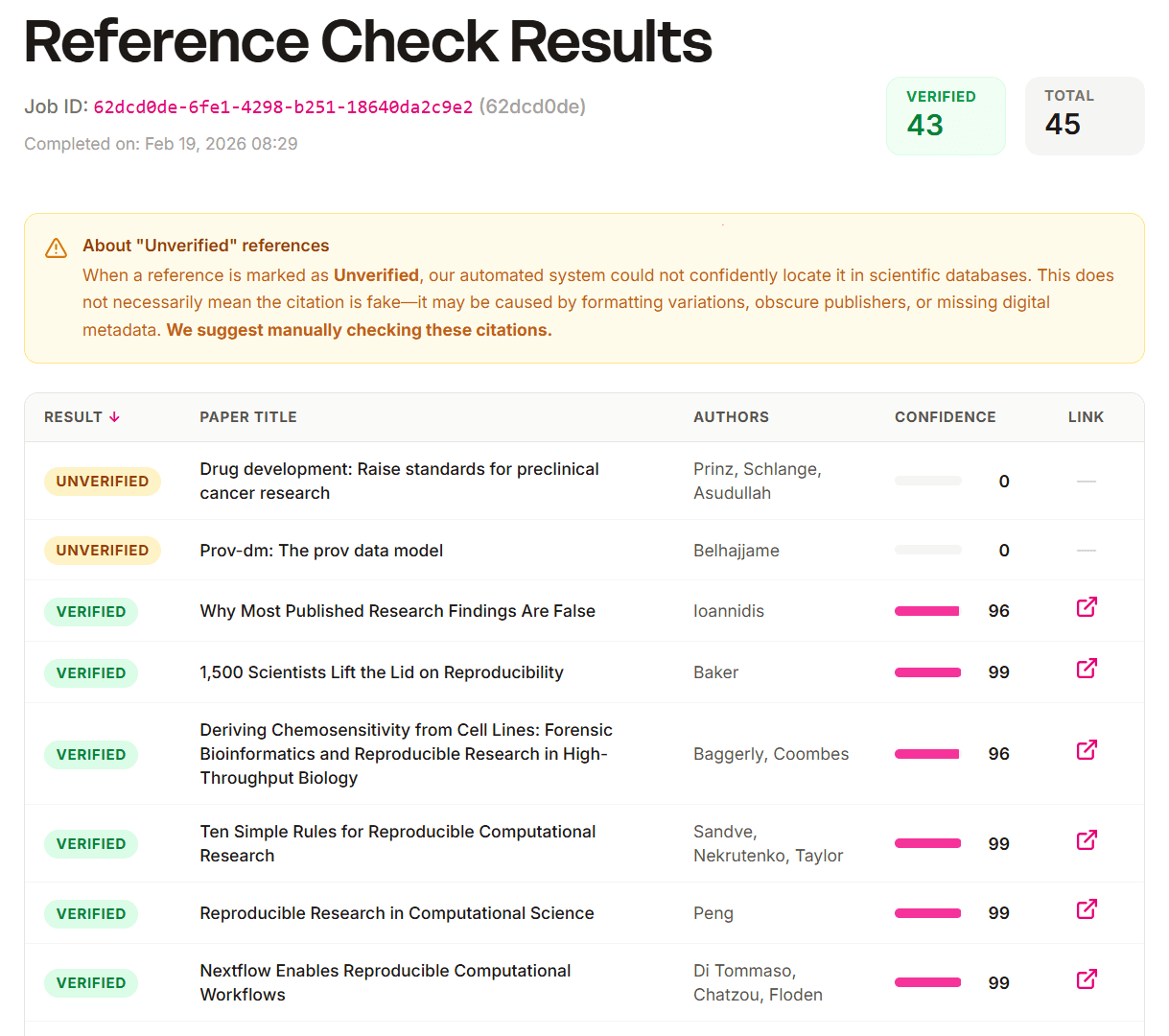

How to read the report

- Verified: we found a strong match and provide a direct external link for inspection.

- Confidence: an interpretable match score based on how well fields such as title, authors, year, venue, and identifiers agree. A higher score means less time you need to spend double-checking.

- Unverified: we could not confidently resolve the entry. Importantly, Unverified does not mean fake. Common reasons include incomplete metadata, formatting variations, obscure venues, non-indexed items, or older sources.
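The exact scoring behind the Confidence value is not specified here. A minimal sketch, assuming a weighted sum of per-field agreements (fuzzy title similarity, surname overlap, exact year/venue/DOI match) compared against a fixed threshold; the weights, the 0.8 threshold, and the function names are all hypothetical. A missing candidate yields Unverified rather than a verdict of fabrication, mirroring the report semantics above.

```python
import re
from difflib import SequenceMatcher
from typing import Optional, Tuple

# Hypothetical field weights (summing to 1) and decision threshold, for illustration only.
WEIGHTS = {"title": 0.4, "authors": 0.25, "year": 0.1, "venue": 0.1, "identifier": 0.15}
VERIFIED_THRESHOLD = 0.8


def _norm(text) -> str:
    """Lowercase and keep only alphanumerics, so "Verification-First" == "verification first"."""
    return " ".join(re.sub(r"[^a-z0-9]+", " ", str(text or "").lower()).split())


def _same(a, b) -> float:
    """1.0 if the parsed field is present and equal to the record's after normalization."""
    return float(bool(_norm(a)) and _norm(a) == _norm(b))


def field_scores(parsed: dict, record: dict) -> dict:
    """Per-field agreement in [0, 1] between a parsed entry and a candidate record."""
    t1, t2 = _norm(parsed.get("title")), _norm(record.get("title"))
    pa = {_norm(a) for a in parsed.get("authors", [])}
    ra = {_norm(a) for a in record.get("authors", [])}
    return {
        "title": SequenceMatcher(None, t1, t2).ratio() if t1 and t2 else 0.0,
        "authors": len(pa & ra) / len(pa | ra) if pa | ra else 0.0,  # Jaccard on surnames
        "year": _same(parsed.get("year"), record.get("year")),
        "venue": _same(parsed.get("venue"), record.get("venue")),
        "identifier": _same(parsed.get("doi"), record.get("doi")),
    }


def assess(parsed: dict, record: Optional[dict]) -> Tuple[str, float]:
    """Return (label, confidence); no candidate record means Unverified, not fake."""
    if record is None:
        return "Unverified", 0.0
    scores = field_scores(parsed, record)
    confidence = sum(WEIGHTS[field] * score for field, score in scores.items())
    label = "Verified" if confidence >= VERIFIED_THRESHOLD else "Unverified"
    return label, round(confidence, 2)


record = {"title": "Preventing the Collapse of Peer Review Requires Verification-First AI",
          "authors": ["You", "Cao", "Gurevych"], "year": 2026, "venue": "arXiv"}
parsed = {"title": "Preventing the collapse of peer review requires verification-first AI",
          "authors": ["You", "Cao", "Gurevych"], "year": 2026, "venue": "arXiv"}
fake = {"title": "Peer review collapse and large language model citations",
        "authors": ["You", "Smith"], "year": 2025, "venue": "arXiv"}
print(assess(parsed, record))  # → ('Verified', 0.85)
print(assess(fake, record))
```

A weighted sum keeps the score interpretable: each field's contribution can be displayed next to the external link, so a low score points directly at the field that disagrees.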

A quick test on a hallucitation dataset

We evaluated Reference Validity Check on 100 citation strings from the hallucinated papers described in [3] (an intentionally hard set with non-resolvable and metadata-corrupted references). The tool achieved a 93% detection rate on the problematic entries in that dataset.

Why this is verification-first (not verification theater)

Our paper warns that checklists can become a new proxy — verification theater — if they only reward appearances. A verification-first tool must keep outputs grounded in inspectable evidence. Reference Validity Check is designed around that constraint: it does not ask for justification paragraphs; it surfaces external records you can click and inspect.

Recommended workflows

For authors (pre-submission): run the check once. Fix obvious metadata errors. If an item is Unverified, add identifiers (DOI/arXiv ID), complete author/year/title fields, or verify the venue name.

For reviewers (triage): use the report as a targeted attention router. If a key claim depends on an Unverified reference, that is a strong signal to allocate a few minutes of manual checking.

For editors and venues: treat this as a lightweight auditability check — a stage-1 screen that verifies whether evidence trails are resolvable before scarce expert bandwidth is spent.
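For the authors' step of adding identifiers, a quick local lint can flag entries that carry neither a DOI nor an arXiv ID before the check is run. This sketch is not part of Reference Validity Check: it uses the pattern Crossref suggests for modern DOIs and the post-2007 arXiv identifier scheme (YYMM.NNNN or YYMM.NNNNN, optional version suffix), and the function names are hypothetical.

```python
import re
from typing import Dict, List

# Crossref's suggested pattern for modern DOIs (case-insensitive).
DOI_RE = re.compile(r"\b10\.\d{4,9}/[-._;()/:A-Z0-9]+", re.IGNORECASE)
# New-style arXiv IDs written as "arXiv:YYMM.NNNN(N)" with an optional version suffix.
ARXIV_RE = re.compile(r"\barXiv:\s*(\d{4}\.\d{4,5}(?:v\d+)?)", re.IGNORECASE)


def find_identifiers(entry: str) -> Dict[str, List[str]]:
    """Collect DOIs and arXiv IDs mentioned in one bibliography entry."""
    # Trailing sentence punctuation is not part of the DOI, so strip it.
    dois = [d.rstrip(".,;") for d in DOI_RE.findall(entry)]
    return {"doi": dois, "arxiv": ARXIV_RE.findall(entry)}


def entries_missing_identifiers(entries: List[str]) -> List[str]:
    """Return entries with neither a DOI nor an arXiv ID: candidates for adding one."""
    return [e for e in entries if not any(find_identifiers(e).values())]


entries = [
    "You, L., Cao, L., & Gurevych, I. (2026). Preventing the Collapse of Peer Review "
    "Requires Verification-First AI. arXiv preprint arXiv:2601.16909.",
    "Doe, J. (2024). An Example Title. Some Workshop.",  # made-up entry with no identifier
]
print(find_identifiers(entries[0]))
print(entries_missing_identifiers(entries))
```

Entries returned by the lint are the ones most likely to end up Unverified, so they are a sensible place to start adding identifiers or completing metadata.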

What comes next

Reference validity is only the first brick. Verification-first AI should expand bandwidth by producing auditable artifacts: claim-evidence maps, code/data consistency checks, reproducibility smoke tests, and more [1, 2]. We are building toward an ecosystem where verification is cheap enough to be routine.

Try it

Copy the references from your paper (from a PDF, LaTeX source, or anywhere else, as long as the text contains your references) and run Reference Validity Check. If you find systematic false negatives (e.g., a class of venues that cannot be resolved), please send feedback: coverage and matching improve fastest when the community uses the tool.

References

[1] You, L., Cao, L., & Gurevych, I. (2026). Preventing the Collapse of Peer Review Requires Verification-First AI. arXiv preprint arXiv:2601.16909.

[2] Baumgärtner, T., & Gurevych, I. (2026). SciCoQA: Quality Assurance for Scientific Paper-Code Alignment. arXiv preprint arXiv:2601.12910.

[3] Sakai, Y., Kamigaito, H., & Watanabe, T. (2026). Hallucitation matters: Revealing the impact of hallucinated references with 300 hallucinated papers in ACL conferences. arXiv preprint arXiv:2601.18724.