🚨 AAAI 2023 Review Scandal: When Reviews Turn into a Thriller! 🚨

Imagine you're anonymously reviewing papers for AAAI, one of AI's premier conferences. Everything feels routine... until suddenly, during the rebuttal phase, an author contacts you directly and pressures you to raise their score. Wait a minute: how did they even know you're the reviewer?

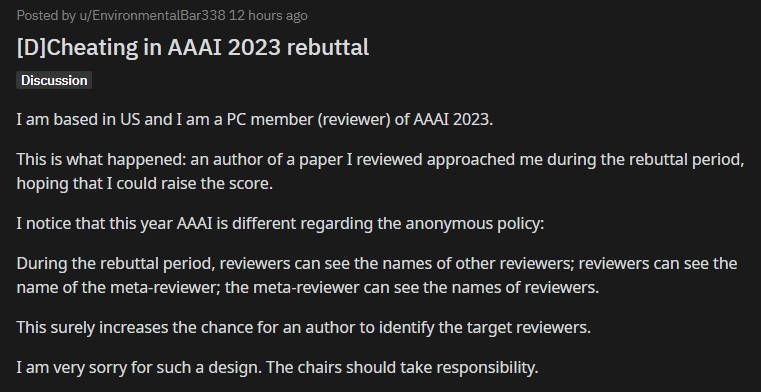

Turns out, this year AAAI had an unexpected twist: reviewers' identities were accidentally exposed to each other during the rebuttal phase! Instead of staying anonymous, you and your fellow reviewers now know exactly who else is evaluating the same papers. Since there's no way to rule out that some of those reviewers know the authors personally, this kind of "transparency" gives authors plenty of room to cheat.

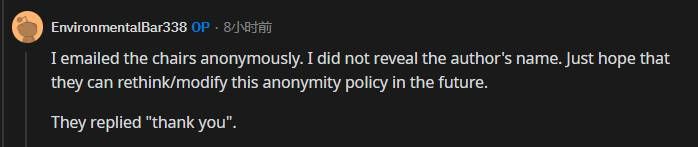

One anonymous reviewer bravely raised the alarm, alerting the conference chairs to this glaring flaw. The response? Just a simple "thank you."

The community exploded:

- Some called for immediate rejection of papers from authors engaging in such unethical behavior.

- Others pointed out the risks of favoritism, bias, and awkward professional dynamics.

Adding fuel to the fire, some authors reported bizarrely inconsistent reviews and unexpected late reviewer assignments, while wild rumors circulated about paid "strong accepts."

Clearly, something went seriously wrong at AAAI 2023.

What's at stake?

The integrity of AI research depends heavily on fair peer review. Incidents like this one highlight the urgent need to rethink and reinforce conference review processes. Could community-driven platforms that offer transparent yet anonymous discussions help avoid such pitfalls? What do you think about this incident? Share your opinion below.