Compositional Subspace Representation Fine-Tuning For Adaptive Large Language Models

-

AI Review for https://arxiv.org/abs/2503.10617v2

Summary

This paper introduces a new method, Compositional Subspace Representation Fine-tuning (CS-ReFT), designed to adapt large language models (LLMs) to multiple tasks while avoiding cross-task interference. The key innovation of CS-ReFT is its use of multiple orthonormal subspace transformations, each specialized for a distinct skill or task, and a lightweight router that composes these subspaces dynamically. The approach is evaluated on the AlpacaEval benchmark, where it demonstrates a high win rate compared to both larger models and other parameter-efficient fine-tuning methods.
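The mechanism summarized above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' implementation: it assumes each subspace applies a LoReFT-style edit, h + Rᵀ(Wh + b − Rh), where R has orthonormal rows, and that the router is a single sigmoid-gated linear layer. The function names and all dimensions are hypothetical.

```python
import numpy as np


def orthonormal_rows(rng, rank, dim):
    """Return a (rank, dim) matrix whose rows are orthonormal."""
    # Reduced QR of a (dim, rank) Gaussian matrix yields orthonormal columns;
    # transposing turns them into orthonormal rows.
    q, _ = np.linalg.qr(rng.standard_normal((dim, rank)))
    return q.T


def router_gates(x, router_w, router_b):
    """Assumed router: one linear layer with a sigmoid gate per subspace."""
    return 1.0 / (1.0 + np.exp(-(router_w @ x + router_b)))


def cs_reft_edit(h, subspaces, gates):
    """Add the gated sum of per-subspace edits to the hidden state h."""
    out = h.copy()
    for gate, (R, W, b) in zip(gates, subspaces):
        # LoReFT-style edit (assumption): overwrite the component of h
        # inside the subspace spanned by R with the learned target W h + b.
        out += gate * (R.T @ (W @ h + b - R @ h))
    return out


# Illustrative sizes only: hidden size 16, rank 4, 3 task subspaces.
rng = np.random.default_rng(0)
d, r, k = 16, 4, 3
subspaces = [
    (orthonormal_rows(rng, r, d), rng.standard_normal((r, d)), rng.standard_normal(r))
    for _ in range(k)
]
router_w = rng.standard_normal((k, d))
router_b = np.zeros(k)

h = rng.standard_normal(d)
gates = router_gates(h, router_w, router_b)
h_edited = cs_reft_edit(h, subspaces, gates)
```

With a single subspace and a gate of 1, projecting the edited state back through R recovers exactly Wh + b, which is what makes the edit confined to that subspace. With all gates at 0 the hidden state passes through unchanged.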

Soundness

2

Presentation

3

Contribution

2

Strengths

- Novel Method: Introduces CS-ReFT to address cross-task interference in multi-task learning using multiple orthonormal subspace transformations and a lightweight router.

- Unique Approach: Distinguishes itself from traditional weight-based methods like LoRA by editing hidden representations rather than updating model weights.

- Clear Structure: The paper is well-organized and clearly written, with a logical flow from the introduction to method, experiments, and conclusions.

- Visual Aids: Uses mathematical formulations and diagrams to effectively explain the subspace transformations and router mechanism.

Weaknesses

- Scalability Concerns: Does not sufficiently discuss how CS-ReFT scales to a large number of tasks, where maintaining a separate low-rank transformation per task may become costly in both compute and storage.

- Interpretability Issues: Lacks an analysis of the interpretability of the router's decisions. Insights into why specific weights are assigned to different subspaces could enhance trust in the model's behavior.
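A back-of-the-envelope count can make the storage concern concrete. The sketch below assumes each task-specific subspace stores a projection R (rank × hidden size), a linear map W (rank × hidden size), and a bias b (rank) at every intervened layer, plus one linear router. This parameterization and the example sizes are assumptions for illustration, not figures from the paper.

```python
def cs_reft_extra_params(num_tasks, hidden_dim, rank, num_layers, router_input_dim):
    """Count added parameters under the assumed parameterization."""
    # Per subspace and layer: R and W are (rank, hidden_dim), b is (rank,).
    per_subspace = 2 * rank * hidden_dim + rank
    subspace_total = num_tasks * num_layers * per_subspace
    # Assumed router: one linear layer producing one gate per task.
    router_total = router_input_dim * num_tasks + num_tasks
    return subspace_total + router_total


# Hypothetical sizes: the count grows linearly with the number of tasks.
for tasks in (4, 16, 64):
    total = cs_reft_extra_params(
        num_tasks=tasks, hidden_dim=4096, rank=4, num_layers=4, router_input_dim=4096
    )
    print(tasks, total)
```

Under these assumptions the overhead is linear in the number of tasks, so the open question is less about asymptotic blow-up than about whether routing quality holds up as subspaces multiply.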

Questions

- Scalability: How does CS-ReFT perform as the number of tasks increases? Are there strategies to optimize or reduce the computational overhead of maintaining separate subspace transformations for each task?

- Router Interpretability: Can the router's decisions be interpreted or visualized to understand why specific subspaces are activated for certain inputs? Are there any patterns that emerge which could provide insights into the model's behavior?

Flag For Ethics Review

No ethics review needed.

Details Of Ethics Concerns

(None)

Rating

5

Confidence

4

-

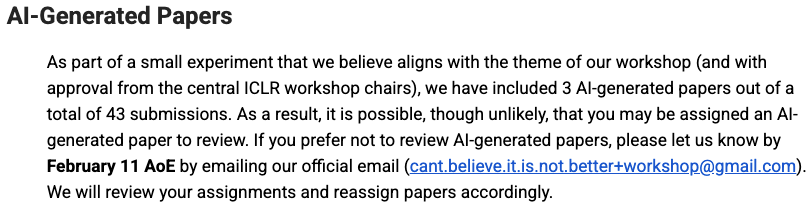

The ICBINB workshop webpage had a section about this, "AI-Generated Papers", in the reviewer guidelines:

https://sites.google.com/view/icbinb-2025/reviewer-guidelines