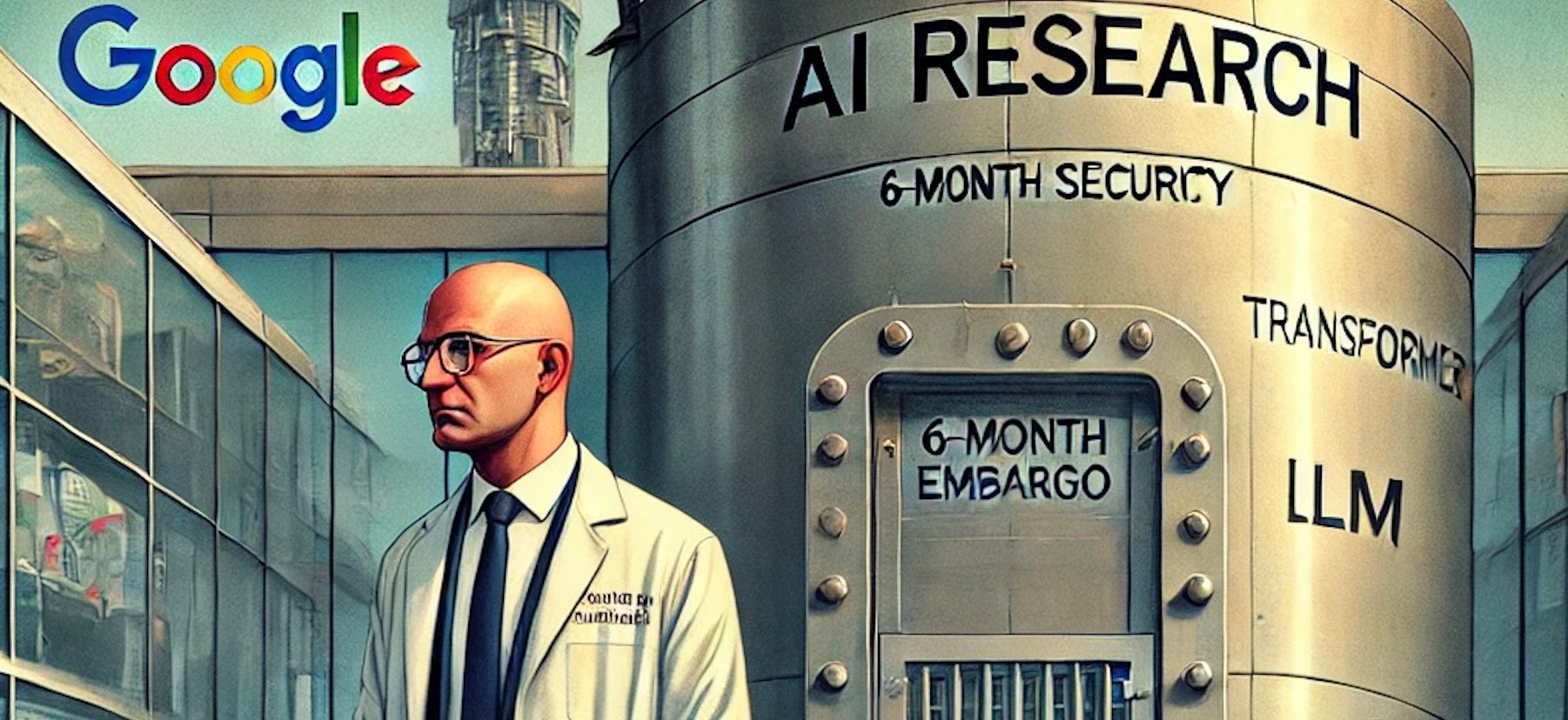

What DeepMind’s 6-Month Paper Embargo Tells Us About Big Tech’s AI Future


“This is a company, not a university campus. If you want the latter, you’re free to leave.”

— Demis Hassabis, CEO of Google DeepMind

Earlier this week, reports emerged that DeepMind, Google’s AI powerhouse, has implemented a six-month embargo on publishing its most strategic AI research, particularly work related to generative models like Gemini. This is no minor policy tweak. It’s a signal flare that Big Tech’s relationship with open science is changing.

Why It Matters (even outside academia)

Traditionally, publishing peer-reviewed papers was the gold standard for measuring progress and credibility in AI. Google Brain and DeepMind helped build that tradition, which produced breakthroughs such as the Transformer architecture, introduced by Google researchers in “Attention Is All You Need” (2017), a paper that changed the entire trajectory of machine learning.

Now, we’re seeing a shift from “publish or perish” to “protect or perish”.

The Underlying Issue Isn’t Peer Review — It’s Control

This embargo is about centralizing control of research pipelines within corporations, making publishing conditional on strategic business alignment. According to internal sources, DeepMind researchers now need to “convince multiple stakeholders” just to publish a paper. Even security-related findings (e.g., LLM vulnerabilities) are reportedly being delayed or suppressed for fear of reputational damage or competitive exposure.

This has created what many insiders describe as an “academic winter” within one of the world’s leading AI labs.

Implications for Google and Big Tech

The message is clear: Open science is no longer a given in corporate AI research. Here are three serious implications:

- Innovation Bottlenecks: Researchers are leaving. High-profile scientists like Nicholas Carlini (an expert in AI safety and privacy) have departed, citing loss of academic freedom as a core reason.

- Reputation Risks: Google once held the moral high ground in AI by championing openness. That legacy is now eroding, especially as rivals like OpenAI navigate a different path by strategically open-sourcing and commercializing at the same time.

- Peer Review as a Casualty: With fewer top-tier papers emerging from leading labs, the entire ecosystem of AI conferences (NeurIPS, ICML, etc.) risks becoming disconnected from the actual cutting-edge.

Google’s Dilemma Is the Industry’s Dilemma

We’re witnessing the rise of the “AI Prisoner’s Dilemma”: if every company hoards its findings, the entire field slows down. But if one company publishes too much, it risks losing its competitive edge.

Google’s shift toward secrecy is understandable from a business standpoint, especially in the heat of competition with OpenAI and Anthropic. But it may come at a long-term cost: less trust from the public, fewer breakthroughs in the open, and a generation of researchers disillusioned with the corporate-AI-academic-industrial complex.

What’s lost isn’t just papers — it’s purpose.
