Paper: Mamba: Linear-Time Sequence Modeling with Selective State Spaces

Conference: ICLR 2024

Result: Reject

Mamba was an ambitious paper proposing a state-space model (SSM) architecture that scales linearly with sequence length and even claims to rival Transformers on language modeling. It generated a lot of buzz for its potential to handle long sequences efficiently. However, when it was submitted to ICLR 2024, the paper was rejected – a decision that surprised many in the research community. Let’s break down why the reviewers (and area chair) said "No", how the community reacted, and whether the critiques seem fair or overly harsh, all in an engaging, conversational look at this incident.

Official Criticisms from Reviewers and Area Chair

The ICLR reviewers and area chair raised several key criticisms of Mamba, mostly targeting its technical evaluation, clarity, and claimed novelty:

-

Baseline & Novelty Concerns: Reviewers felt that Mamba didn’t sufficiently compare against prior art. The paper follows a line of research on efficient sequence models (e.g. S4-diagonal, SGConv, MEGA, SPADE, and other near-linear transformers), so they expected comparisons to those existing models. In particular, one reviewer flagged the absence of a direct comparison to H3, a hybrid SSM/attention model that the authors cited as inspiration. Published results showed H3 achieving much better perplexities on The Pile benchmark, yet Mamba’s submission didn’t include H3 in its experiments. This omission made it hard for reviewers to judge if Mamba truly outperformed the best prior methods. In short, the novelty claim ("state-of-the-art across modalities") was undermined by the lack of head-to-head baseline results with all relevant models.

-

Experimental Evaluation (Technical Thoroughness): Several critiques focused on the evaluation metrics and benchmarks used. One glaring issue was the absence of Long Range Arena (LRA) results, which a reviewer noted is "the standard benchmark for long sequence understanding". Given Mamba’s focus on long sequences, not testing on LRA was seen as a major omission. Additionally, the paper’s results were largely centered on language modeling perplexity (how well the model predicts text), with relatively few downstream or task-specific evaluations. In fact, a reviewer remarked that the authors only provided zero-shot language modeling results, which was "rather limited" evidence of effectiveness – they specifically recommended adding a long-document task like document summarization (e.g. using arXiv papers with 8k+ tokens) to demonstrate Mamba’s utility on real long-context tasks. The heavy reliance on perplexity as the primary metric raised eyebrows, since it covers just one aspect of performance. In summary, the reviewers wanted to see Mamba prove itself on a broader range of benchmarks (both standard academic ones like LRA and more applied tasks) rather than only the chosen evaluations in the submission.

-

Clarity of Presentation: While not as dominant as the technical issues, there were also remarks about the paper’s clarity and organization. The "overarching narrative was slightly confused", as one observer put it. The flow of the paper and the motivation for each component didn’t land perfectly for everyone. One Reddit commenter (echoing what a reviewer might feel) said reading the paper "left me with a ton of questions along the lines of ‘What about performance on X task or Y benchmark?". This suggests that the exposition may not have clearly anticipated or answered important questions, making the contribution harder to assess. In a conference setting, lack of clarity can amplify doubts – if reviewers aren’t 100% sure they understand the method and its implications, they tend to be more critical. So any confusion in the writing/narrative likely didn’t help Mamba’s case.

-

Other Technical Issues: One reviewer flagged Mamba’s efficiency claims, noting that its "Speed and Memory Benchmarks" only reported speed, not memory usage, which is a key factor for long-sequence models. Another concern was that Mamba still requires quadratic memory during training, similar to Transformers, despite its linear-time inference, potentially limiting its advantage. Reviewers also questioned its length generalization — whether a model trained on short sequences (e.g., 1k tokens) could effectively handle much longer ones (e.g., 10k tokens), something some Transformer variants achieve with relative position embeddings. These gaps raised doubts about Mamba’s scalability and real-world applicability.

In the official meta-review and scores, it seems one reviewer in particular gave a very low score ("3: reject, not good enough") and strongly argued the above points. The area chair ultimately agreed with these critiques. Essentially, the verdict was that Mamba’s paper, as submitted, fell short on technical evaluation and clarity, despite its interesting ideas. The authors did try to address these issues in a revision (they even added the missing H3 comparisons, where Mamba actually came out ahead once tested, but it wasn’t enough to reverse the decision. The official stance was that the paper needed more work to meet ICLR’s bar.

It’s worth noting that the reviewers did recognize some positives – for example, they acknowledged the importance of efficient long-sequence modeling and found the idea of input-dependent state-space parameters intriguing. One can infer that at least some reviewers were impressed by the 5× speedup and the novel "associative scan" trick for fast training. However, these strengths were mentioned only in passing compared to the critiques, and ultimately the criticisms carried more weight.

Community Reactions: Agreement and Dissent

The rejection of Mamba sparked extensive discussion on social media and forums. Reactions from the research community were mixed – some aligned with the reviewers’ reasoning, while many others sharply diverged from it.

On one side, a number of researchers actually agreed (at least in part) with the official criticisms. For instance, the original poster in one Reddit discussion admitted that after reading the paper, they were "not particularly surprised" it got rejected. They felt that beyond some interesting hardware-aware tweaks, Mamba seemed "like it was a simple adaptation of a previous paper" and that the experiments were "not as extensive" as expected. This perspective basically echoes the novelty and thoroughness concerns raised by reviewers. Such folks argued that just because Mamba had Twitter hype doesn’t automatically guarantee a free pass at a conference – the paper still needed to tick certain boxes. In their eyes, the ICLR committee’s skepticism was justified, given the unanswered questions about baselines and performance on other benchmarks.

However, a much larger and louder contingent of the community was taken aback by the rejection – and many felt it was the wrong call. On Twitter (X), numerous prominent researchers voiced surprise. Perhaps the most notable reaction came from Sasha Rush, who tweeted "Mamba apparently was rejected!? ... Honestly I don’t even understand. If this gets rejected, what chance do us [small labs] have?". This sentiment ("if this got rejected, what can ever get in?") was shared by others who viewed Mamba as an exciting advance. The general feeling among these folks was bewilderment: Mamba had shown strong results (e.g. matching a Transformer twice its size, according to the preprint) and tackled a timely problem, so rejecting it felt puzzling if not outright unfair.

On Reddit, many commenters pushed back against the reviewers’ rationale. Some argued that Mamba "should have gotten in" and blamed the outcome on bad luck with one stringent reviewer and an area chair who "just ran with it". There was a sense that the paper might have been accepted if a different, more sympathetic committee had handled it. Others specifically disagreed with the insistence on certain benchmarks: for example, one discussion highlighted that the reviewers’ "final insistence on long range arena evaluation" was odd because Mamba had already demonstrated performance on tasks with far longer sequences (millions of tokens), making LRA seem like a dated, "relatively facile benchmark" in comparison. In short, many in the community felt the reviewers were "nitpicking" – focusing on somewhat formulaic requirements (like running a legacy benchmark or adding one more baseline) while downplaying the paper’s real innovations. As one frustrated commenter quipped, "they achieved linear-time sequence modeling that outperforms Transformer++… a goal dozens of labs have chased for years. If that’s not enough for an ICLR paper, then I think I’ll remove my ICLR publication from the web ’cuz I’m not worthy either.". That tongue-in-cheek remark captures the disbelief and concern that the bar was set extremely high for Mamba. Some even used clown emojis  to mock the decision, reflecting a broader frustration with the peer review process.

to mock the decision, reflecting a broader frustration with the peer review process.

In summary, the public opinion was split. A minority nodded along with the official reasons (lack of certain results, overhyped claims), but a majority seemed to think Mamba got a raw deal. This divergence between the official verdict and the community buzz made the case of Mamba’s rejection particularly noteworthy.

Were the Criticisms Reasonable or Overly Harsh?

Now comes the big question: looking at all these perspectives, were the reviewers being perfectly reasonable guardians of scientific rigor, or were they overly harsh on Mamba? The answer is a bit of both – let’s unpack it.

On one hand, the criticisms have merit in principle. It’s not outlandish for reviewers to expect a paper to include standard benchmarks and thorough comparisons. Missing an evaluation like LRA does leave a gap, since it’s a common yardstick to compare long-sequence models. Similarly, not including a baseline that is known to be strong (H3) or not testing an obvious use-case (long document summarization) are valid shortcomings. These are the kind of omissions that peer reviewers regularly point out, and normally authors address them in revisions. In Mamba’s case, the authors even did add some of those missing pieces in their rebuttal (they ran the H3 benchmark and showed improved results. So the content of the critiques wasn’t crazy: the reviewers were basically asking for more evidence to back up Mamba’s claims, which is a reasonable thing at a top conference.

On the other hand, many feel the bar was set unusually high for this paper, perhaps higher than necessary for a fair evaluation. The demands for certain benchmarks like LRA, for example, struck some as pedantic or dated. Mamba was tackling sequences far longer and more complex than those in LRA, so insisting on LRA (which deals with at most 4K token sequences and relatively simple tasks) might be applying a checkbox mentality rather than engaging with what the paper actually achieved. In that sense, the criticism could be seen as overly rigid: focusing on an older benchmark just because it’s conventional, rather than acknowledging that Mamba introduced its own, perhaps more relevant, evaluations. Likewise, the knock on using "only" perplexity could be viewed as a bit harsh – perplexity is a standard metric in language modeling, and Mamba did also report results on other modalities (like audio and genomics) in the paper, albeit less prominently. It’s arguable that the reviewers were technically correct in wanting more, but maybe didn’t fully appreciate what was already there. The clarity issues are hard to judge: if the writing truly confused multiple readers, that’s a fair reason to be cautious. But the core ideas weren’t unsound or anything – those could presumably be clarified in camera-ready.

Another aspect is how unforgiving the decision was. Many papers get conditional accepts or at least an encouragement to resubmit after adding experiments, whereas Mamba was flat-out rejected despite its revisions. This led some to feel the reviewers/AC were inflexible or perhaps "overly nitpicky." One Reddit commenter even speculated that Mamba "had really bad luck with the AC… with those reviews, it should have gotten in". This suggests that, in a different scenario, the same paper might have squeaked through. So, in hindsight, the criticisms were real and important, but maybe too heavy-handed in the final decision. The novel contributions (a new selective SSM mechanism, a custom CUDA-kernel-powered parallel scan for RNN training, impressive speedups) were substantial – arguably more so than many papers that did get accepted – so some feel the committee could have given Mamba the benefit of the doubt.

In the end, whether the rejection was "fair" is debatable. The critiques weren’t random – they pinpointed genuine areas for improvement. But given how excited a lot of researchers were about Mamba, there’s a sense that the paper was held to an exceptionally strict standard. Perhaps a middle ground view is that the reviewers were cautious but not crazy: they wanted a more polished and comprehensive submission. It’s just unfortunate (and a bit ironic) that a paper aiming to push the boundaries had its wings clipped by adherence to very conventional evaluation criteria.

It’s worth briefly acknowledging that Mamba did have strong points noted, even if they ultimately got overshadowed. Some reviewers and commenters were impressed by the paper’s innovations – for example, the idea of making state-space model parameters input-dependent (the "selective" part of Mamba) was seen as a clever way to enable content-based reasoning in an RNN-like model. The authors’ implementation of an associative scan algorithm (essentially a parallel prefix-sum trick) to train the model in linear time was praised as "fascinating and novel". The model demonstrated 5× faster inference throughput than Transformers and achieved lower perplexity than similarly-sized Transformers, which one commenter noted is a feat that "labs throughout the world have been tirelessly chasing for years". In evaluations, Mamba hit state-of-the-art results on certain long-context tasks, and one of the higher-scoring reviewers likely acknowledged these strengths. All told, the paper’s potential was clearly recognized.

Broader Implications

This whole Mamba saga has some interesting broader takeaways for sequence modeling research and the ML community at large. Firstly, it underscores just how high the bar is to challenge Transformers. Even when you have a model that, on paper, can outperform Transformers on some tasks with better scaling, the community (and conference reviewers) will demand very thorough proof. Mamba’s rejection sends a message: if you’re proposing a new architecture in this space, you’d better dot your i’s and cross your t’s in terms of evaluations. Established benchmarks (no matter how old) will likely need to be checked off to satisfy everyone. This might be frustrating (as the Mamba debate showed, some benchmarks might be outdated), but it reflects a cautious approach – extraordinary claims require solid evidence in all areas.

The incident also highlights a bit of a disconnect between hype and peer review. It’s a reminder that a paper can be widely discussed and admired online yet still get knocked back in formal review. In the long run, though, the community discussion can have positive effects. In Mamba’s case, the outcry and interest may well motivate the authors (and others in the field) to improve evaluation standards. We might see future sequence modeling papers include both new and old benchmarks to avoid a "Mamba situation." There’s also a chance that the collective criticism of the review process here (with researchers posting clown emojis and expressing confusion) could add to calls for improving how we review innovative papers – perhaps encouraging more open-mindedness or flexibility for unconventional but promising work.

For the subfield of state-space models and RNN variants, Mamba’s journey is not over. The authors are likely working on a revised version (they’ve already shown some fixes like the H3 comparison in the rebuttal). If Mamba (or a "Mamba v2") manages to address the concerns and get published, it could validate the approach and give a boost to non-transformer sequence models. As one blog noted, companies and researchers are probably already testing Mamba’s ideas at larger scales to see if the performance holds up (Mamba: The Easy Way). If it does, it could influence the design of future large language models, offering a path to more efficient long-context processing. In a broader sense, the rejection sparked such a big conversation that it actually drew more attention to the work. Inadvertently, the controversy put Mamba on more people’s radar, which might accelerate progress (the old "any publicity is good publicity" adage).

Finally, this incident might cause researchers to reflect on benchmarking and evaluation practices in sequence modeling. Should we continue relying on benchmarks like LRA? How do we balance demonstrating real-world impact (e.g. long document tasks) versus standardized tests? Mamba opened up those questions. Some feel that clinging to facile benchmarks can hold the field back, so perhaps the community will develop new, more relevant benchmarks for long-context models as a result.

In conclusion, the rejection of Mamba at ICLR 2024 was not just a single paper’s setback; it became a talking point that provided a multi-perspective lesson. The official reasons boiled down to "promising idea, but show us more," focusing on technical rigor, clarity, and completeness. The public reaction ranged from "the reviewers had a point" to "the reviewers missed the point", highlighting a healthy tension in how we assess new research. Were the critiques fair? In part yes, though many argue they were a bit too conservative. The silver lining is that Mamba’s ideas are now out in the open and being refined, and the whole episode could inspire better evaluations and perhaps more balanced reviewing of bold research. In the fast-evolving field of sequence modeling, the Mamba story is a reminder that innovation often comes hand-in-hand with controversy – and that the path to acceptance (both social and academic) may require not just a great idea, but also the right evidence presented in the right way.

Accepted

Accepted Rejected

Rejected Rejected

Rejected Accepted

Accepted Rejected

Rejected Accepted

Accepted Rejected

Rejected Rejected

Rejected Accepted

Accepted Rejected

Rejected Accepted

Accepted Rejected

RejectedNote: Data sourced from community discussions on Zhihu, Reddit, and OpenReview threads. Subject to sample bias.

5.0 "Award-worthy (top 2.5%)"

5.0 "Award-worthy (top 2.5%)" ️ 4.0 "ACL-worthy"

️ 4.0 "ACL-worthy" 3.5 "Borderline Conference"

3.5 "Borderline Conference" 3.0 "Findings-tier" (Translation: "We’ll take it… but hide it in the appendix")

3.0 "Findings-tier" (Translation: "We’ll take it… but hide it in the appendix") 1.0 "Do not resubmit" (a.k.a. "Burn this and start over")

1.0 "Do not resubmit" (a.k.a. "Burn this and start over") 5 cycles/year now (April’s cycle vanished; June moved to May).

5 cycles/year now (April’s cycle vanished; June moved to May). LLM stampede: *"8,000+ submissions per ARR cycle!

LLM stampede: *"8,000+ submissions per ARR cycle! Got a 3.0? Pray your meta-reviewer is merciful.

Got a 3.0? Pray your meta-reviewer is merciful. ARR loophole: "Score low in Feb? Resubmit to May ARR and aim for EMNLP."

ARR loophole: "Score low in Feb? Resubmit to May ARR and aim for EMNLP." Share your ACL 2025 horror stories below! Did you rebut or run?

Share your ACL 2025 horror stories below! Did you rebut or run?

This likely caused the four reviewers and AC to miss the similarity in methodology — which probably explains why MoA scored so highly (6,6,8,8).

This likely caused the four reviewers and AC to miss the similarity in methodology — which probably explains why MoA scored so highly (6,6,8,8). .

.

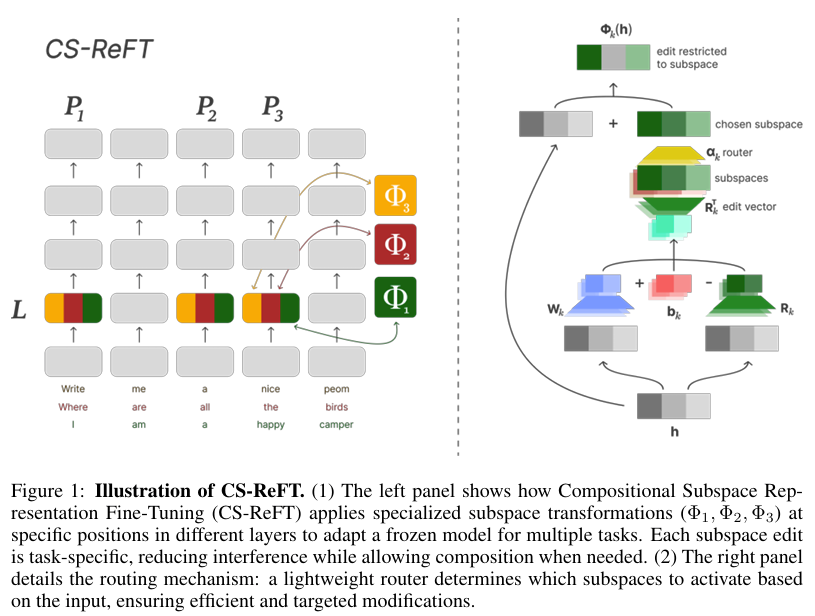

Both CS-ReFT and Siege paper highlight not just the capabilities of AI-driven research but also the ethical and practical dilemmas emerging from automated scientific exploration and discovery.

Both CS-ReFT and Siege paper highlight not just the capabilities of AI-driven research but also the ethical and practical dilemmas emerging from automated scientific exploration and discovery.

The papers are produced by Zochi created by Ron and Andy:

The papers are produced by Zochi created by Ron and Andy:

to mock the decision, reflecting a broader frustration with the peer review process.

to mock the decision, reflecting a broader frustration with the peer review process.