Shocking Cases, Reviewer Rants, Score Dramas, and the True Face of CV Top-tier Peer Review!

“Just got a small heart attack reading the title.”

— u/Intrepid-Essay-3283, Reddit

[image: giphy.gif]

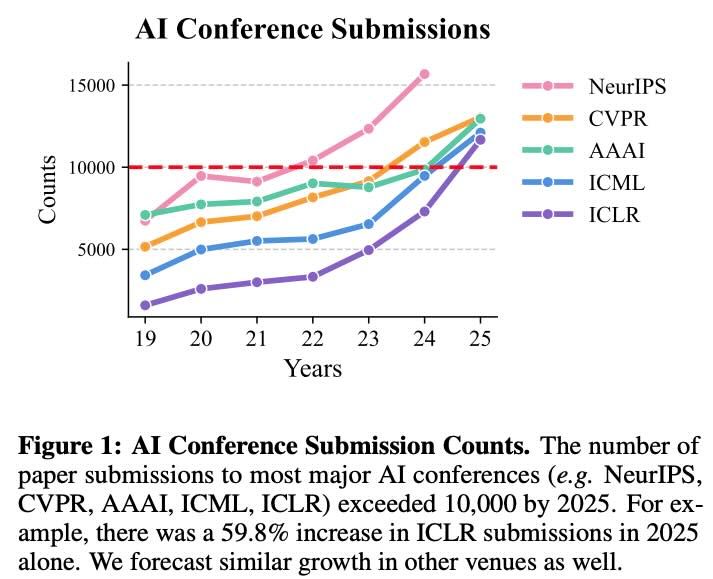

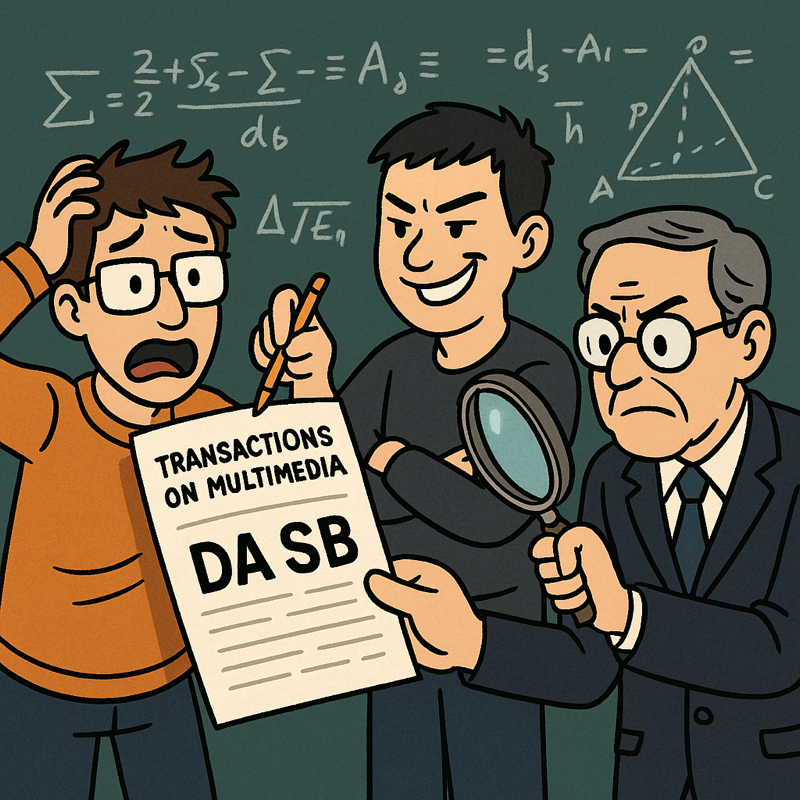

Introduction: ICCV 2025 — Not Just Another Year

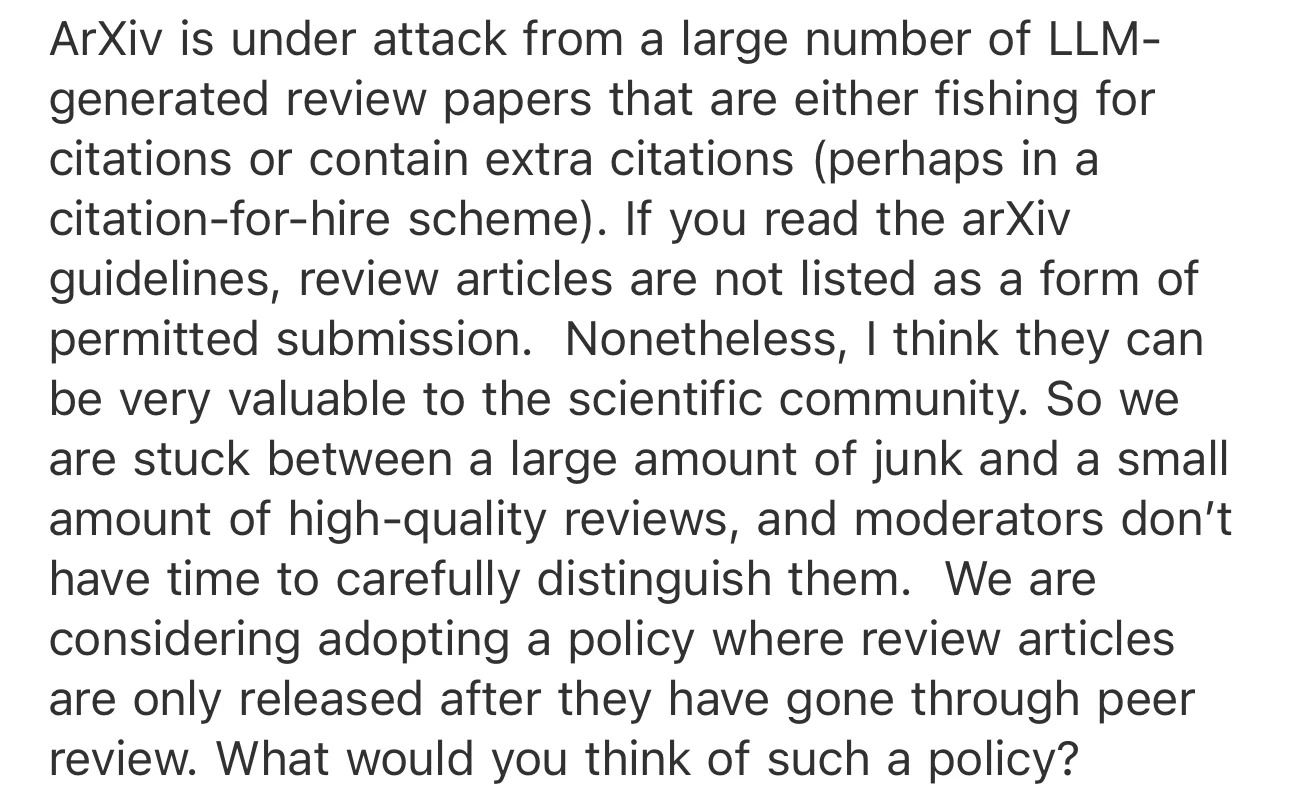

ICCV 2025 might have broken submission records (11,239 papers! 🤯), but what really set this year apart was the open outpouring of review experiences, drama, and critique across communities like Zhihu and Reddit. If you think peer review is just technical feedback, think again. This year, it was a social experiment in bias, randomness, AI-detection accusations, and — sometimes — rare acts of fairness.

Below, we dissect dozens of real cases reported by the community. Expect everything: miracle accepts, heartbreak rejections, reviewer bias, AC heroics, AI accusations, desk rejects, and score manipulation. Plus, we bring you the ultimate summary table — all real, all raw.

The Hall of Fame: ICCV 2025 Real Review Cases

Here’s a complete table of every community case reported above. Each row is a real story. Find your favorite drama!

#

Initial Score

Final Score

Rebuttal Effect

Decision

Reviewer/AC Notes / Notable Points

Source/Comment

1

4/4/2

5/4/4

+1, +2

Accept

AC sided with authors after strong rebuttal

Reddit, ElPelana

2

5/4/4

6/5/4

+1, +1

Reject

Meta-review agreed novelty, but blamed single baseline & "misleading" boldface

Reddit, Sufficient_Ad_4885

3

5/4/4

5/4/4

None

Reject

Several strong scores, still rejected

Reddit, kjunhot

4

5/5/3

6/5/4

+1, +2

Accept

"Should be good" - optimism confirmed!

Reddit, Friendly-Angle-5367

5

4/4/4

4/4/4

None

Accept

"Accept with scores of 4/4/4/4 lol"

Reddit, ParticularWork8424

6

5/5/4

6/5/4

+1

Accept

No info on spotlight/talk/poster

Reddit, Friendly-Angle-5367

7

4/3/2

4/3/3

+1

Accept

AC "saved" the paper!

Reddit, megaton00

8

5/5/4

6/5/4

+1

Accept

(same as #6, poster/talk unknown)

Reddit, Virtual_Plum121

9

5/3/2

4/4/2

mixed

Reject

Rebuttal didn't save it, "incrementality" issue

Reddit, realogog

10

5/4/3

-

-

Accept

Community optimism for "5-4-3 is achievable"

Reddit, felolorocher

11

4/4/2

4/4/3

+1

Accept

AC fought for the paper, luck matters!

Reddit, Few_Refrigerator8308

12

4/3/4

4/4/5

+1

Accept

Lucky with AC

Reddit, Ok-Internet-196

13

5/3/3

4/3/3

-1 (from 5 to 4)

Reject

Reviewer simply wrote "I read the rebuttals and updated my score."

Reddit, chethankodase

14

5/4/1

6/6/1

+1/+2

Reject

"The reviewer had a strong personal bias, but the ACs were not convinced"

Reddit, ted91512

15

5/3/3

6/5/4

+1/+2

Accept

"Accepted, happy ending"

Reddit, ridingabuffalo58

16

6/5/4

6/6/4

+1

Accept

"Accepted but not sure if poster/oral"

Reddit, InstantBuffoonery

17

6/3/2

-

None

Reject

"Strong accept signals" still not enough

Reddit, impatiens-capensis

18

5/5/2

5/5/3

+1

Accept

"Reject was against the principle of our work"

Reddit, SantaSoul

19

6/4/4

6/6/4

+2

Accept

Community support for strong scores

Reddit, curious_mortal

20

4/4/2

6/4/2

+2

Accept

AC considered report about reviewer bias

Reddit, DuranRafid

21

3/4/6

3/4/6

None

Reject

BR reviewer didn't submit final, AC rejected

Reddit, Fluff269

22

355

555

+2

Accept

"Any chance for oral?"

Reddit, Beginning-Youth-6369

23

5/3/2

-

-

TBD

"Had a good rebuttal, let's see!"

Reddit, temporal_guy

24

4/3/4

-

-

TBD

"Waiting for good results!"

Reddit, Ok-Internet-196

25

5/5/4

5/5/4

None

Accept

"555 we fn did it boys"

Reddit, lifex_

26

633

554

-

Accept

"Here we go Hawaii♡"

Reddit, DriveOdd5983

27

554

555

+1

Accept

"Many thanks to AC"

Reddit, GuessAIDoesTheTrick

28

345

545

+2

Accept

"My first Accept!"

Reddit, Fantastic_Bedroom170

29

4/4/2

232

-2, -2

Reject

"Reviewers praised the paper, but still rejected"

Reddit, upthread

30

5/4/4

5/4/4

None

Reject

"Another 5/4/4 reject here!"

Reddit, kjunhot

31

432

432

None

TBD

"432 with hope"

Zhihu, 泡泡鱼

32

444

444

None

Accept

"3 borderline accepts, got in!"

Zhihu, 小月

33

553

555

+2

Accept

"5-score reviewer roasted the 3-score reviewer"

Zhihu, Ealice

34

554

555

+1

Accept

"Highlight downgraded to poster, but happy"

Zhihu, Frank

35

135

245

+1/+2

Reject

"Met a 'bad guy' reviewer"

Zhihu, Frank

36

235

445

+2

Accept

"Congrats co-authors!"

Zhihu, Frank

37

432

432

None

Accept

"AC appreciated explanation, saved the paper"

Zhihu, Feng Qiao

38

442

543

+1/+1

Accept

"After all, got in!"

Zhihu, 结弦

39

441

441

None

TBD

"One reviewer 'writing randomly'"

Zhihu, ppphhhttt

40

4/4/3/2

-

-

TBD

"Asked to use more datasets for generalization"

Zhihu, 随机

41

446 (443)

-

-

TBD

"Everyone changed scores last two days"

Zhihu, 877129391241

42

553

553

None

Accept

"Thanks AC for acceptance"

Zhihu, Ealice

43

4/4/3/2

-

-

Accept

"First-time submission, fair attack points"

Zhihu, 张读白

44

4/4/4

4/4/4

None

Accept

"Confident, hoping for luck"

Zhihu, hellobug

45

5541

-

-

TBD

"Accused of copying concurrent work"

Zhihu, 凪·云抹烟霞

46

554

555

+1

Accept

"Poster, but AC downgraded highlight"

Zhihu, Frank

47

6/3/2

-

None

Reject

High initial, still rejected

Reddit, impatiens-capensis

48

432

432

None

Accept

"Average final 4, some hope"

Zhihu, 泡泡鱼

49

563

564

+1

Accept

"Grateful to AC!"

Zhihu, 夏影

50

6/5/4

6/6/4

+1

Accept

"Accepted, not sure if poster or oral"

Reddit, InstantBuffoonery

NOTE:

This is NOT an exhaustive list of all ICCV 2025 papers, but every real individual case reported in the Zhihu and Reddit community discussions included above.

Many entries were “update pending” at posting — when the author didn’t share the final result, marked as TBD.

Many papers changed hands between accept/reject on details like one reviewer not updating, AC/Meta reviewer overrides, “bad guy”/mean reviewers, and luck with batch cutoff.

🧠 ICCV 2025 Review Insights: What Did We Learn?

1. Luck Matters — Sometimes More Than Merit

Multiple papers with 5/5/3 or even 6/5/4 were rejected. Others with one weak reject (2) got in — sometimes only because the AC “fought for it.”

"Getting lucky with the reviewers is almost as important as the quality of the paper itself." (Reddit)

2. Reviewer Quality Is All Over the Place

Dozens reported short, generic, or careless reviews — sometimes 1-2 lines with major negative impact.

Multiple people accused reviewers of being AI-generated (GPT/Claude/etc.) — several ran AI detectors and reported >90% “AI-written.”

Desk rejects were sometimes triggered by reviewer irresponsibility (ICCV officially desk-rejected 29 papers for "irresponsible" reviewers).

3. Rebuttal Can Save You… Sometimes

Many cases where good rebuttals led to score increases and acceptance.

But also numerous stories where reviewers didn’t update, or even lowered scores post-rebuttal without clear reason.

4. Meta-Reviewers & ACs Wield Real Power

Several stories where ACs overruled reviewers (for both acceptance and rejection).

Meta-reviewer “mistakes” (e.g., recommend accept but click reject) — some authors appealed and got the result changed.

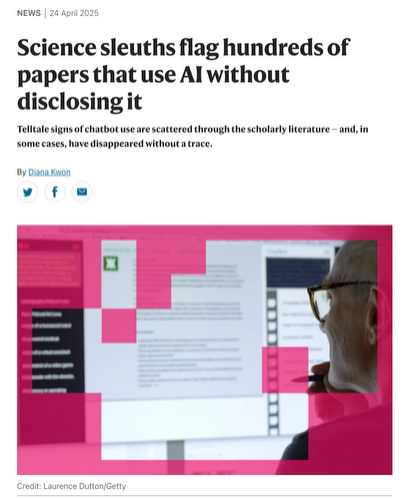

5. System Flaws and Community Frustrations

Complaints about the “review lottery”, irresponsible/underqualified reviewers, ACs ignoring rebuttal, and unfixable errors.

Many hope for peer review reform: more double-blind accountability, reviewer rating, and even rewards for good reviewing (see this arXiv paper proposing reform).

Community Quotes & Highlights

"Now I believe in luck, not just science."

— Anonymous

"Desk reject just before notification, it's a heartbreaker."

— 877129391241, Zhihu

"I got 555, we did it boys."

— lifex, Reddit

"Three ACs gave Accept, but it was still rejected — I have no words."

— 寄寄子, Zhihu

"Training loss increases inference time — is this GPT reviewing?"

— Knight, Zhihu

"Meta-review: Accept. Final Decision: Reject. Reached out, they fixed it."

— fall22_cs_throwaway, Reddit

Final Thoughts: Is ICCV Peer Review Broken?

ICCV 2025 gave us a microcosm of everything good and bad about large-scale peer review: scientific excellence, reviewer burnout, human bias, reviewer heroism, and plenty of randomness.

Takeaways:

Prepare your best work, but steel yourself for randomness.

Test early on https://review.cspaper.org before and after submission to help build reasonable expectation

Craft a strong, detailed rebuttal — sometimes it works miracles.

If you sense real injustice, appeal or contact your AC, but don’t count on it.

Above all: Don’t take a single decision as a final judgment of your science, your skill, or your future.

Join the Conversation!

What was YOUR ICCV 2025 review experience?

Did you spot AI-generated reviews? Did a miracle rebuttal save your work?

Is the peer review crisis fixable, or are we doomed to reviewer roulette forever?

“Always hoping for the best! But worse case scenario, one can go for a Workshop with a Proceedings Track!”

— Reddit

[image: peerreview-nickkim.jpg]

Let’s keep pushing for better science — and a better system.

If you find this article helpful, insightful, or just painfully relatable, upvote and share with your fellow researchers. The struggle is real, and you are not alone!

3

3

3

3

6

6